MSA - AI With Memory Like An Elephant

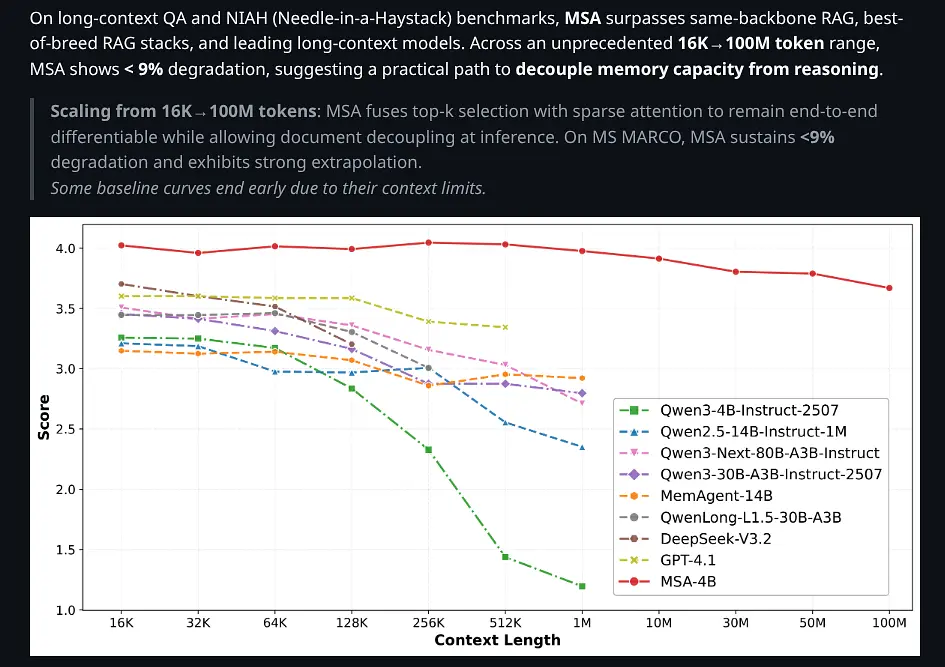

MSA, or Memory Sparse Attention, is a new AI system that can remember 100 million tokens with less than 9% accuracy loss. Most current AI models forget everything after about 128,000 tokens.

MantraVid Admin

March 20, 2026

Why Does AI Forget Everything?

Here's a strange thought: your dog remembers more of your life than ChatGPT does.

Every walk you've taken together. The way you grab your keys. The sound of the door opening. Your dog builds up a rich, interconnected web of memories over years.

ChatGPT? It forgets everything after about 128,000 tokens. That's roughly a small novel. Ask it to summarize a conversation you had three hours ago, and it will politely tell you it can't. Because that information simply does not exist in its mind anymore.

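This is not a bug. It is a fundamental architectural limitation. A transformer can only attend to what fits inside its context window, and every token in that window has to be compared against every other token. Everything has to pass through the same bottleneck. The more you add, the more it costs, and whatever falls outside the window is lost.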
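To put a rough number on that bottleneck, here is a back-of-the-envelope sketch. It assumes standard dense self-attention, where every token is scored against every other token. That assumption is ours, not something the article or the paper is quoted on here, and the function name is made up for illustration.

```python
# Assumption: standard dense self-attention, where every token in the
# window is scored against every other token. Illustrative arithmetic only.

def attention_pairs(n_tokens: int) -> int:
    """Number of query-key scores one dense attention layer computes."""
    return n_tokens * n_tokens

window = 128_000        # context size quoted in the article
target = 100_000_000    # context size MSA is reported to reach

growth_in_tokens = target / window
growth_in_pairs = attention_pairs(target) / attention_pairs(window)

print(f"tokens grow by {growth_in_tokens:,.0f}x")
print(f"scores grow by {growth_in_pairs:,.0f}x")
```

Under that assumption, going from 128,000 to 100 million tokens means roughly 780 times more text but roughly 610,000 times more comparisons. That gap is why simply stretching the window gets expensive so quickly.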

But what if it didn’t have to be this way?

A new paper from researchers at Evermind and Peking University proposes something radical: an AI that remembers nearly everything you give it. Not just 128,000 tokens. Not a million. But 100 million tokens. Roughly 1,000 books, searchable in milliseconds.

They call it MSA, or Memory Sparse Attention, and it might be the most important AI breakthrough you have never heard of.

So Is This Real?

Let us be honest. The AI space is full of papers that promise the world and deliver a demo. So where does MSA actually land?

The researchers did not invent some brand-new fundamental technology. They have been clever about combining existing ideas in a new way. Think about how the iPhone did not invent touchscreens or microprocessors, but put them together in a way that changed everything.

What they actually figured out:

How to make an AI learn what to remember and what to forget, without being told explicitly.

A trick involving how positions are encoded that lets the model train on 64,000 tokens and still work at 100 million. Imagine teaching someone to find books in a small closet, and then watching them find books in Amazon's warehouses just as easily.

A way to store the important stuff on fast memory and the less important stuff on slower, cheaper memory. It is like having a desk for what you are working on right now and a filing cabinet for everything else.

The realism is actually impressive. This is not just a theoretical paper. They trained on nearly 160 billion tokens. They demonstrate it running on just two GPUs. The 100 million token scale is not a simulation. It is an actual working system.

So: Is it revolutionary? Maybe, maybe not. Is it practical and clever and actually working? Absolutely.

How Does It Actually Work?

Imagine you have a friend named Alex who has an unusual gift. Alex can read an entire library, but only remembers the important parts.

Not everything. Alex does not memorize every word. Instead, Alex has developed an intuition for what matters. Every book, every article, every document. Alex instantly compresses it into a tiny summary. This book is about X, and the key insight is Y, and it is related to these other topics.

Now here is the really wild part. When you ask Alex a question, Alex does not have to re-read everything. Instead, Alex thinks: "Hmm, this question is about X. Let me pull up the summaries that are most relevant. Oh, this one from that book on physics, and this one from that research paper, and that one from a news article from three years ago."

In seconds, Alex retrieves the three to five compressed summaries most relevant to your question and answers with full context.

Now imagine Alex has done this for a whole library. Every book. Every email. Every scientific paper. Every news article. A hundred million tokens' worth. Roughly a thousand books of text.

And Alex can do this lookup in milliseconds.

That is MSA.

Here is how it works:

Compression. Every document gets squished into a tiny summary. Not the full text, just the key information distilled down. Think of it like the executive summary at the top of a long email.

Routing. When you ask a question, the system does not search everything. It uses a router. This is a trained component that learns which compressed summaries are most likely relevant. It is like asking a question and having a perfect internal instinct for where that information lives.

Smart Storage. The most important summaries live in fast memory, which is the GPU's own memory. The rest live in slower, cheaper memory, which is regular system RAM. The system only loads what it needs, when it needs it.

The Position Trick. This is the clever bit. Normally, when you train an AI on short text and ask it to work with long text, it gets confused about positions. It is like someone who learned to navigate a small room being suddenly dropped into a warehouse. MSA solves this by giving each document its own mini GPS. Its own position system. So it does not matter how many documents there are.
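A minimal sketch of why per-document positions help. This is not the paper's actual encoding, just a toy comparison, and every function name here is hypothetical. It contrasts one global position counter with counters that restart at zero inside each document.

```python
# Hypothetical illustration: one global position counter versus
# positions that restart at 0 inside every document.

def global_positions(doc_lengths):
    """Positions when all documents are concatenated into one long sequence."""
    positions, offset = [], 0
    for length in doc_lengths:
        positions.append(list(range(offset, offset + length)))
        offset += length
    return positions

def per_document_positions(doc_lengths):
    """Positions when each document keeps its own local counter."""
    return [list(range(length)) for length in doc_lengths]

def max_position(positions):
    """Largest position index the model would ever have to interpret."""
    return max(p for doc in positions for p in doc)

small_memory = [4, 3]           # two short documents
large_memory = [4, 3] * 1000    # the same documents, 1000 times over

for name, lengths in [("small", small_memory), ("large", large_memory)]:
    global_max = max_position(global_positions(lengths))
    local_max = max_position(per_document_positions(lengths))
    print(f"{name}: largest global position = {global_max}, "
          f"largest local position = {local_max}")
```

With a global counter, the largest position grows with the size of the memory (6 for the small case, 6999 for the large one). With local counters it stays at 3 in both. The model never sees a position it did not meet during training, no matter how many documents are added.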

The result is an AI that can handle 100 million tokens of context with less than 9% accuracy loss. Compare that to many current models, whose context windows stop at around 128,000 tokens.
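The compression, routing, and storage steps described above can be sketched as a toy pipeline. Everything here is a stand-in. In MSA the compressor and the router are learned, while this sketch uses word counts and word overlap. The split between "fast" and "slow" storage is our simplification, and names such as `TieredStore` are hypothetical.

```python
import heapq
from collections import Counter

def tokenize(text: str) -> list:
    """Lowercase words with surrounding punctuation removed."""
    return [w.strip(".,?!").lower() for w in text.split()]

def compress(text: str, keep: int = 3) -> Counter:
    """Toy 'summary': the most frequent words longer than three letters.
    Stand-in for a learned compressor, which this sketch does not implement."""
    counts = Counter(w for w in tokenize(text) if len(w) > 3)
    return Counter(dict(counts.most_common(keep)))

def route(query: str, summaries: dict, top_k: int = 2) -> list:
    """Toy router: score each summary by word overlap with the query.
    A real router would be trained; this one is a fixed heuristic."""
    q = Counter(tokenize(query))
    scores = {doc_id: sum(q[w] * c for w, c in summary.items())
              for doc_id, summary in summaries.items()}
    ranked = heapq.nlargest(top_k, scores, key=scores.get)
    return [doc_id for doc_id in ranked if scores[doc_id] > 0]

class TieredStore:
    """Small summaries stay in 'fast' memory; full text sits in 'slow'
    memory and is fetched only when the router selects it."""

    def __init__(self, documents: dict):
        self.slow = dict(documents)  # stand-in for CPU RAM
        self.fast_summaries = {doc_id: compress(text)  # stand-in for GPU memory
                               for doc_id, text in documents.items()}
        self.loaded = {}             # what the last query actually fetched

    def answer_context(self, query: str, top_k: int = 2) -> list:
        chosen = route(query, self.fast_summaries, top_k)
        self.loaded = {doc_id: self.slow[doc_id] for doc_id in chosen}
        return chosen

docs = {
    "physics": "Gravity bends light. Gravity shapes orbits. Light travels fast.",
    "cooking": "Simmer the sauce. Taste the sauce. Season the pasta.",
    "history": "The empire expanded. The empire collapsed. Trade routes shifted.",
}

store = TieredStore(docs)
print(store.answer_context("Why does gravity bend light?"))  # ['physics']
print(list(store.loaded))                                    # ['physics']
```

The point of the sketch is the shape of the flow, not the scoring. The query only ever touches the small summaries, and the bulky text for the cooking and history documents is never loaded.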

What Does This Mean?

This paper is a signal. Here is what it is telling us.

The context window race is winding down.

For the past two years, companies have been in an arms race. 32K, 128K, 1M, 2M tokens. It is impressive, but it is a dead end. You are still trying to shove everything through the same bottleneck. MSA represents a paradigm shift. Stop trying to hold everything. Start trying to remember smartly.

AI memory is the next frontier.

We are about to see a wave of AI products that remember you. Not in a creepy surveillance way. In a useful way. An AI assistant that remembers every meeting you have had. An AI analyst that knows your entire company. An AI tutor that adapts to how you learn.

The team behind MSA explicitly mentions Digital Twins as their goal. An AI that knows everything about you or your business, forever.

RAG is a transitional technology.

RAG, or retrieval-augmented generation, is the current standard. You have a database, you search it, you add the results to the prompt as context. It is clever but clunky. It is like having to Google something mid-conversation. MSA is the difference between having to look it up and actually knowing it.

RAG will stick around for a while, but the writing is on the wall.

The privacy question is coming.

If AI can remember everything, what happens to privacy? What happens to the ability to start fresh? We are going to have to develop new norms around AI memory. What it means. What we consent to. What gets forgotten.

We are one step closer to something like AGI.

Full memory is a prerequisite for general intelligence. Humans do not just reason. We remember. We accumulate. We build on what we know. MSA is a significant step toward AI that can do the same.

What You Should Remember

MSA is not the end of AI memory research. It is a milestone. A proof of concept that shows what is possible when you stop fighting the bottleneck and start working with it.

But it also signals where the whole field is heading. Away from bigger context windows. Toward actual memory systems.

Your dog remembers you. Your partner remembers your anniversary. Your favorite barista remembers your order.

Now, finally, AI might too.
